- KUBERNETES INSTALL APACHE SPARK ON KUBERNETES HOW TO

- KUBERNETES INSTALL APACHE SPARK ON KUBERNETES DRIVER

- KUBERNETES INSTALL APACHE SPARK ON KUBERNETES MANUAL

- KUBERNETES INSTALL APACHE SPARK ON KUBERNETES DOWNLOAD

With the cluster-autoscaler add-on, you can automatically scale the worker pools in your IBM Cloud Kubernetes Service classic or VPC cluster, increasing or decreasing the number of worker nodes in a worker pool based on the sizing needs of your scheduled workloads. The cluster-autoscaler add-on is based on the Kubernetes Cluster-Autoscaler project. For scaling apps, check out the IBM Cloud documentation.
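To make "sizing needs of your scheduled workloads" concrete, here is a deliberately simplified sketch of the idea. It is not the algorithm the Cluster-Autoscaler actually uses. The function name, the CPU-only view of capacity, and the minimum and maximum pool sizes are all assumptions made for illustration.

```python
import math
from typing import Sequence


def workers_needed(requested_cpu: Sequence[float],
                   cpu_per_worker: float,
                   min_size: int,
                   max_size: int) -> int:
    """Estimate a worker-pool size from the CPU requests of scheduled pods.

    Hypothetical helper: the real autoscaler also considers memory,
    scheduling constraints, and scale-down delays.
    """
    if cpu_per_worker <= 0:
        raise ValueError("cpu_per_worker must be positive")
    # Round up: a partially used worker node is still a whole node.
    wanted = math.ceil(sum(requested_cpu) / cpu_per_worker)
    # Never go below the pool minimum or above the pool maximum.
    return max(min_size, min(max_size, wanted))


if __name__ == "__main__":
    # Five pods requesting one CPU each, on workers with four usable CPUs.
    print(workers_needed([1.0] * 5, cpu_per_worker=4.0, min_size=1, max_size=3))
```

The clamp in the last line is why the requests you set on your pods matter: with no requests, an estimate like this has nothing to grow on.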

The autoscaling of the pods and of the IBM Cloud Kubernetes Service cluster depends on the requests and limits you set on the Spark driver and executor pods. Note: To check the Kubernetes resources, logs, and so on, I would recommend IBM-kui, which offers a hybrid command-line/UI experience for cloud native development.
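As a minimal sketch of how those requests and limits might be passed to Spark, the helper below collects them as Spark properties and turns them into `--conf` arguments for `spark-submit`. The function names and the sample values are hypothetical. The property names come from Spark's Kubernetes configuration, but some of them (for example `spark.kubernetes.driver.request.cores`) are only available in newer Spark releases, so check the documentation for your version.

```python
from typing import Dict, List


def resource_conf(driver_request: str, driver_limit: str,
                  executor_request: str, executor_limit: str,
                  driver_memory: str, executor_memory: str) -> Dict[str, str]:
    """Collect Spark properties that control pod CPU requests/limits and memory."""
    return {
        "spark.kubernetes.driver.request.cores": driver_request,
        "spark.kubernetes.driver.limit.cores": driver_limit,
        "spark.kubernetes.executor.request.cores": executor_request,
        "spark.kubernetes.executor.limit.cores": executor_limit,
        "spark.driver.memory": driver_memory,
        "spark.executor.memory": executor_memory,
    }


def to_submit_args(conf: Dict[str, str]) -> List[str]:
    """Turn a property mapping into repeated `--conf key=value` arguments."""
    args: List[str] = []
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args


if __name__ == "__main__":
    # Sample values only; size them for your own workload.
    conf = resource_conf("1", "1", "500m", "1", "1g", "2g")
    print(" ".join(to_submit_args(conf)))
```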

After following the steps, you should be able to download the kubeconfig configuration file for your cluster and add it to your existing kubeconfig in ~/.kube/config or to the last file listed in the KUBECONFIG environment variable.
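If you are unsure which file that is on your machine, the rule in the sentence above can be sketched in a few lines. The function name `kubeconfig_target` is hypothetical, and this only mirrors the rule as stated here; it does not call any IBM Cloud or kubectl API.

```python
import os
from pathlib import Path
from typing import Mapping


def kubeconfig_target(env: Mapping[str, str] = os.environ) -> Path:
    """Return the kubeconfig file the cluster configuration would be added to."""
    raw = env.get("KUBECONFIG", "")
    # KUBECONFIG may list several files, separated like PATH entries.
    entries = [entry for entry in raw.split(os.pathsep) if entry]
    if entries:
        return Path(entries[-1]).expanduser()
    # Fall back to the default location when the variable is unset or empty.
    return Path.home() / ".kube" / "config"


if __name__ == "__main__":
    print(kubeconfig_target())
```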

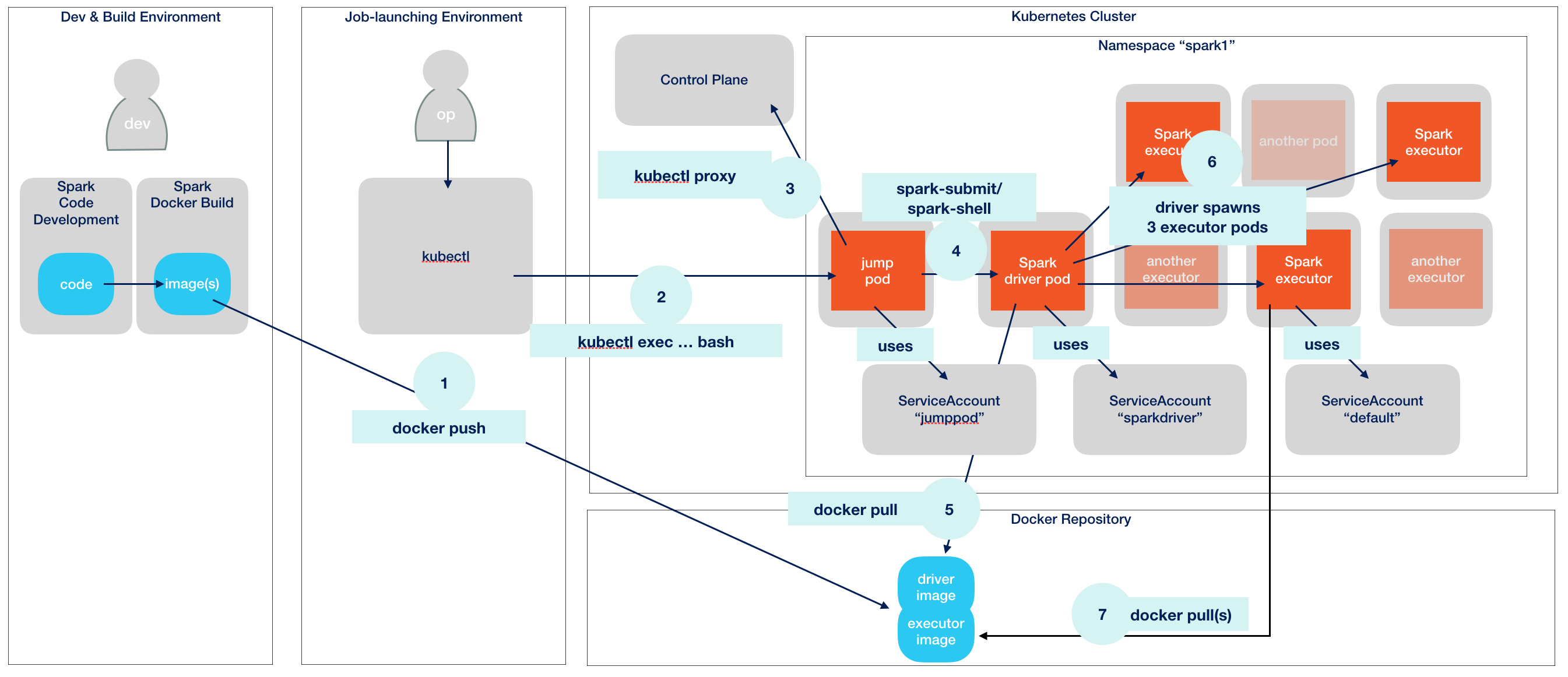

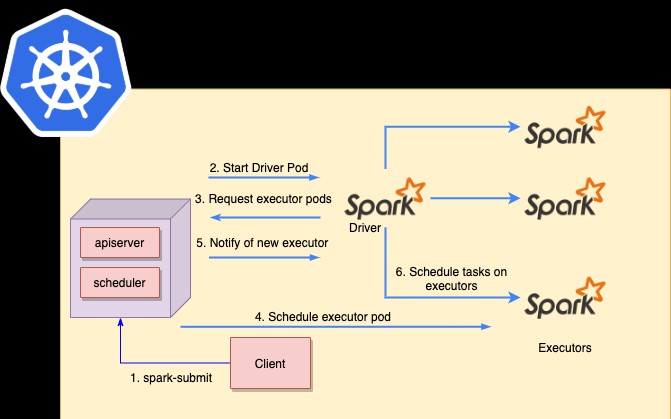

IBM Cloud Kubernetes Service is a managed offering to create your own Kubernetes cluster of compute hosts to deploy and manage containerized apps on IBM Cloud. As a certified Kubernetes provider, IBM Cloud Kubernetes Service provides intelligent scheduling, self-healing, horizontal scaling, service discovery and load balancing, automated rollouts and rollbacks, and secret and configuration management for your apps.

To understand how Spark works on Kubernetes, refer to the Spark documentation. The following occurs when you run your Python application on Spark:

- Apache Spark creates a driver pod with the requested CPU and memory.
- The driver then creates executor pods that connect to the driver and execute application code.
- While the application is running, executor pods are terminated and new pods are created based on the load.
- Once the application completes, all the executor pods are terminated and the logs are persisted in the driver pod, which remains in the completed state.

In short, you need three things to complete this journey:

- A runnable distribution of Spark 2.3 or above.
- A standard IBM Cloud Kubernetes Service cluster that is running, with access configured to it using kubectl. Check the IBM Cloud Kubernetes Service documentation to create a cluster. For autoscaling, set the Worker nodes per zone to one.
- An IBM Cloud Container Registry with a namespace set up. Install and set up the IBM Cloud Container Registry CLI and a namespace.

With these in place, configure the IBM Cloud Kubernetes Service cluster.
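The driver and executor pods described in this section are created when you submit the application in cluster mode. As a rough sketch, the helper below assembles such a `spark-submit` command. The function name, the API server URL, the image name, and the application path are placeholders and assumptions, not values taken from this article; substitute the ones for your own cluster and registry namespace.

```python
from typing import List


def spark_submit_command(api_server: str, image: str, app_path: str,
                         app_name: str = "spark-py-app",
                         executors: int = 2) -> List[str]:
    """Build a spark-submit command that targets a Kubernetes cluster."""
    return [
        "spark-submit",
        # The k8s:// prefix tells Spark to talk to the Kubernetes API server.
        "--master", f"k8s://{api_server}",
        # Cluster mode: the driver itself runs in a pod on the cluster.
        "--deploy-mode", "cluster",
        "--name", app_name,
        "--conf", f"spark.executor.instances={executors}",
        # Image the driver and executor pods are started from.
        "--conf", f"spark.kubernetes.container.image={image}",
        # local:// means the file is already inside the container image.
        app_path,
    ]


if __name__ == "__main__":
    cmd = spark_submit_command(
        api_server="https://example.invalid:443",          # placeholder
        image="us.icr.io/my-namespace/spark-py:latest",    # placeholder
        app_path="local:///opt/spark/examples/src/main/python/pi.py",  # assumed path
    )
    print(" ".join(cmd))
```

Building the command as a list keeps each argument separate, so it can be passed to `subprocess.run` without shell quoting problems.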

Let's begin by looking at the technologies involved.

What is Apache Spark?

Apache Spark (Spark) is an open source data-processing engine for large data sets. It is designed to deliver the computational speed, scalability and programmability required for Big Data, specifically for streaming data, graph data, machine learning and artificial intelligence (AI) applications. Spark's analytics engine processes data 10 to 100 times faster than alternatives. It scales by distributing processing work across large clusters of computers, with built-in parallelism and fault tolerance. It even includes APIs for programming languages that are popular among data analysts and data scientists, including Scala, Java, Python and R.

Quick intro to Kubernetes and the IBM Cloud Kubernetes Service

Kubernetes is an open source platform for managing containerized workloads and services across multiple hosts. It offers management tools for deploying, automating, monitoring and scaling containerized apps with minimal-to-no manual intervention.

Learn how to set up Apache Spark on IBM Cloud Kubernetes Service by pushing the Spark container images to IBM Cloud Container Registry.